PER User's Guide New Features

We are redesigning and expanding the PER User's Guide! Below is a description of the new features that will be live this summer:

Home Page (DUE 1245490)

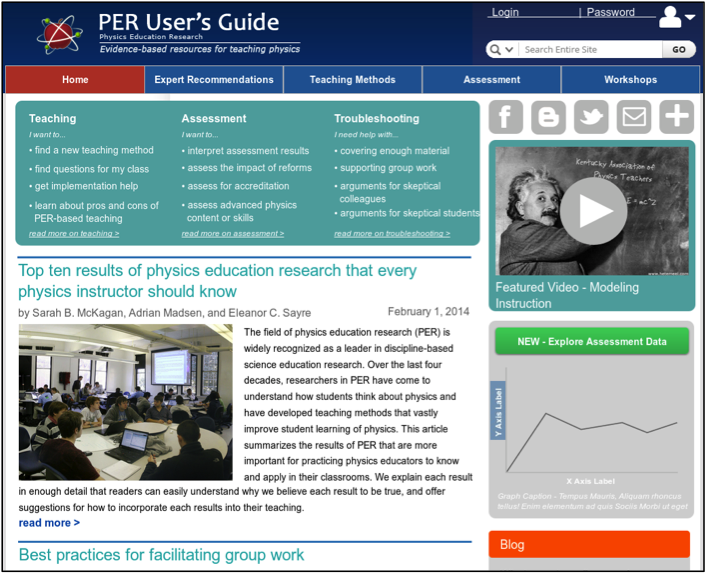

Our site redesign includes a new home page (Fig. 1), which more clearly communicates to users what the site is about and what kinds of things they can do there. In particular, the jump bar at the top of the page lists the most popular things that users want to do on the site, in their own words, as identified in interviews. Preliminary user testing suggests that the jump bar is very compelling to users and effective in conveying what the site is all about. The new home page also includes a sidebar that lets us feature aspects of the site we want to highlight, such as the new data explorer and blog.

Fig. 1: Design for new home page

Teaching Method detail pages and implementation guides (DUE 1245490)

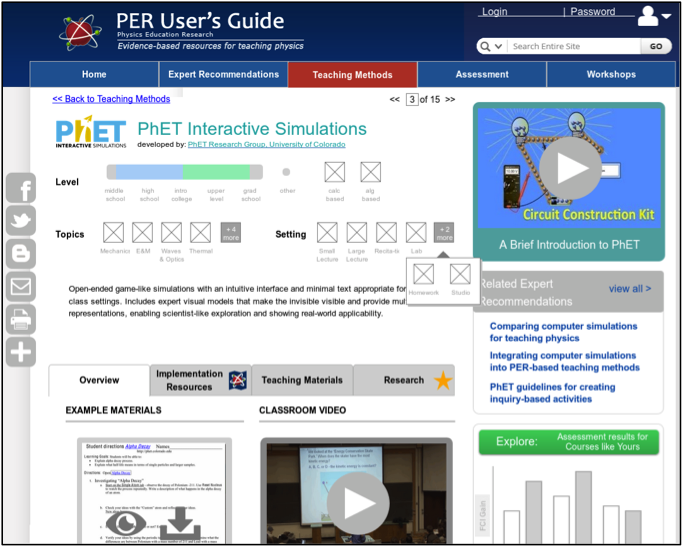

Based on faculty interviews and user testing of the teaching method overviews and implementation guides on the pilot site, we made significant changes to these pages: we improved the visual display, refined the content (Fig. 2), and added tabs that more clearly show users what additional information is available.

The first and third tabs prominently display example materials and, when available, other teaching materials. We added these features because our faculty interviews showed that teaching materials are among the most important features for faculty.

Fig. 2: Design for new teaching method detail page

Assessment instrument detail pages and implementation guides (DUE 1256352)

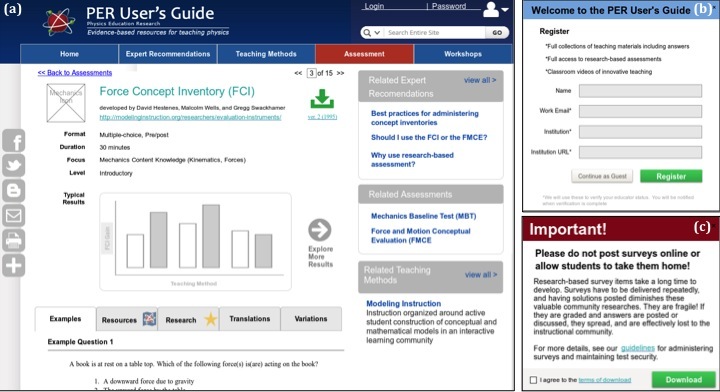

A major new feature of the expanded site is information about research-based assessment. Based on faculty interviews about their assessment needs, we designed an assessment detail page (Fig. 3a) similar to the teaching method detail page (Fig. 2). The new site will include pages for over 50 research-based assessment instruments, such as the Force Concept Inventory (FCI), Force and Motion Conceptual Evaluation (FMCE), and the Colorado Learning and Attitudes about Science Survey (CLASS).

Fig. 3: (a) Designs for new assessment detail page; (b) registration pop-up; (c) security terms pop-up

Secure access to assessment instruments (DUE 1256352)

With permission from instrument developers, we will host assessment instruments and allow verified educators to download them, along with implementation guides, by clicking the green download button in Fig. 3a. To address concerns from researchers and instrument developers that students who access the instruments could compromise the validity of research results, we have designed a system to keep instruments secure: users must register and be verified as educators by an AAPT staff member (Fig. 3b), and they must agree to terms that include not posting surveys online and not allowing students to take them home (Fig. 3c). We are refining this system through consultation with the American Modeling Teachers’ Association, which owns the FCI, and with other instrument developers.

Assessment instrument browse pages (DUE 1256352)

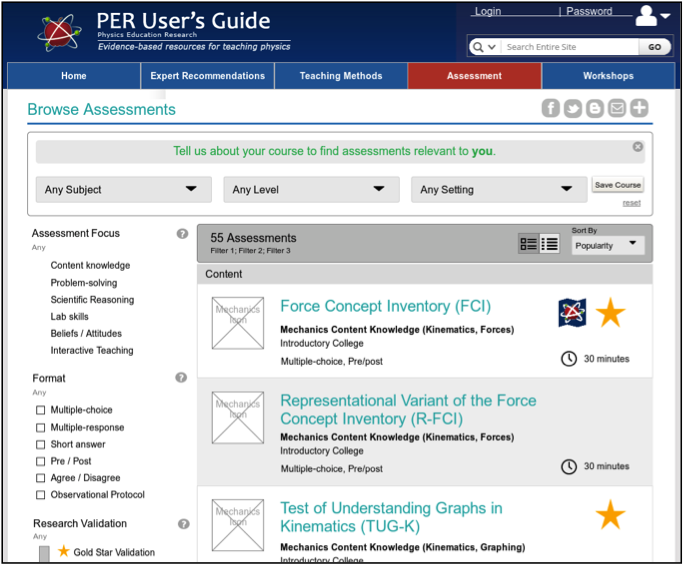

We have designed a new assessment instrument browse page (Fig. 4), similar to the current home page for browsing teaching methods. Based on interviews, both browse pages will have several new features, including a course filter that lets users search for teaching methods or assessments suited to a specific course and save that course for future searches. The course filter is also integrated into the new data explorer (described below), so course information saved here can be applied to assessment data that faculty upload for that course.

Fig. 4: Design for new assessment browse page

Expert Recommendations (DUE 1245490)

In interviews, we found that in addition to unbiased information about individual teaching methods and assessment instruments, faculty wanted expert recommendations about what to do with these tools, how they compare, and how people use them in practice. To address this need, we created a new area of the site featuring expert recommendations in the form of brief articles written by PER User’s Guide staff and other PER experts, on topics such as “Top 10 results of physics education research that every physics instructor should know,” “Best practices for layout of interactive classrooms,” “Best practices for administering concept inventories,” and “Best practices for assessing your own teaching.” In user testing interviews, faculty were particularly excited about this area of the site.

Online video workshops (DUE 1323699, 1245490)

Another new section of the site features two sets of online video workshops developed by our collaborators:

(1) Scherr, the developer of the Video Resource for Learning Assistant (LA) Development, is expanding this resource to create Periscope (DUE 1323699), a collection of 30 workshops featuring short, compelling video episodes of classrooms using research-based teaching methods. The episodes are accompanied by captions, a transcript, excerpts from instructional materials, and targeted discussion questions that help TAs, LAs, and faculty explore the principles and values that inform instructor and student behavior.

(2) Chasteen, the developer of the University of Colorado Clicker videos, videotaped the most recent Workshop for New Faculty in Physics and Astronomy, a popular and successful workshop that introduces new faculty to PER-based teaching methods through live presentations by leaders in PER. She is creating a Virtual New Faculty Workshop (DUE 1245490) that combines live screen capture of presentations with video of the presenters, producing virtual presentations for faculty who cannot attend the workshop (or who attended and want a refresher).

Faculty in our user testing interviews, especially those in the seeker category, were enthusiastic about this new section of the site.

Landing pages for assessment and teaching (DUE 1245490)

We have designed landing pages for the teaching methods and assessment sections of the site. Each landing page emphasizes the variety of resources available on its topic, featuring relevant expert recommendations along with the data explorer and the teaching method and assessment browse pages.

Assessment data explorer, uploader, and visualizer (DUE 1347728)

The largest component of the new site is a national database of research-based assessment results and an accompanying large-scale interactive data explorer to visualize and perform intuitive one-click analyses (Fig. 5). This tool will house de-identified student assessment results from a wide variety of research-based assessments including conceptual assessments and surveys of attitudes and beliefs.

In faculty interviews, we found that faculty want to compare their students with their own past students and with students at similar institutions, and that they want a question-by-question breakdown of their assessment results. The first three tabs in our “quick analyses” do just that. In interviews with department heads and course coordinators, we found a need to compare assessment results across instructors and over time. Our fourth “quick analyses” tab displays summary information on many datasets and allows users to choose which instructors and semesters they would like to compare.

Fig. 5: Design for new Assessment Data Explorer
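
The question-by-question comparison offered by the first three “quick analyses” tabs can be illustrated with a short Python sketch. This is not the site's implementation: it assumes each student's results are stored as a list of 0/1 scores (1 = correct), one per question, and the function names `per_question_percent_correct` and `compare_by_question` are hypothetical.

```python
def per_question_percent_correct(responses):
    """Percentage of students answering each question correctly.

    `responses` has one entry per student; each entry is a list of 0/1
    scores (1 = correct), one per question, all of the same length.
    """
    n_students = len(responses)
    n_questions = len(responses[0])
    return [
        100.0 * sum(student[q] for student in responses) / n_students
        for q in range(n_questions)
    ]


def compare_by_question(current, comparison):
    """Pair each question's percent correct for the current class with that of a
    comparison group (e.g. the instructor's past students) and report the difference."""
    cur = per_question_percent_correct(current)
    ref = per_question_percent_correct(comparison)
    return [
        {"question": q + 1, "this_class": c, "comparison": r, "difference": c - r}
        for q, (c, r) in enumerate(zip(cur, ref))
    ]


# Hypothetical toy data: 4 students x 3 questions per term.
this_term = [[1, 0, 1], [1, 1, 0], [0, 1, 1], [1, 1, 1]]
last_term = [[1, 0, 0], [0, 0, 1], [1, 1, 0], [1, 0, 0]]
for row in compare_by_question(this_term, last_term):
    print(row)
```

In this toy example the class matches last term on question 1 and outperforms it by 50 percentage points on questions 2 and 3; the same pairing would work with a comparison group drawn from similar institutions.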

The data explorer allows faculty to choose which measures to display on the x- and y-axes, as well as different types of graphs (histograms, distributions, etc.). We also display summaries of the statistical analyses used to make the comparisons. In our interviews, we learned that faculty are interested in robust statistical comparisons of their data but are not sure which statistical tests to use. We therefore display the results of each analysis, an interpretation of those results, and further information about the test.
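
The text does not say which statistical tests the data explorer runs. As one plausible example, the Python sketch below compares two sections' scores with Welch's t statistic (which does not assume equal variances) and Cohen's d effect size, then attaches a plain-language label using Cohen's conventional 0.2/0.5/0.8 cutoffs. The function names are hypothetical; a p-value would additionally require the t distribution, which SciPy provides through `scipy.stats.ttest_ind(a, b, equal_var=False)`.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom for two
    independent samples whose variances may differ."""
    va = variance(a) / len(a)  # squared standard error of mean(a)
    vb = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def cohens_d(a, b):
    """Cohen's d: difference in means divided by the pooled sample standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var)


def interpret_d(d):
    """Plain-language label using Cohen's conventional cutoffs (0.2, 0.5, 0.8)."""
    size = abs(d)
    if size < 0.2:
        return "negligible difference"
    if size < 0.5:
        return "small difference"
    if size < 0.8:
        return "medium difference"
    return "large difference"


# Hypothetical post-test percentages for two sections.
section_a = [60, 70, 80]
section_b = [50, 60, 70]
t, df = welch_t(section_a, section_b)
d = cohens_d(section_a, section_b)
print(f"t = {t:.2f}, df = {df:.1f}, d = {d:.2f} ({interpret_d(d)})")
```

Reporting an effect size alongside the test statistic is one way to give faculty the "interpretation of the results" described above, since d stays meaningful even when class sizes are too small for the t test to reach significance.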

In interviews, faculty said that they often give research-based assessments but don’t know how to interpret the results and use them to improve their teaching. To help with this, we offer recommendations based on their results. For example, for an instructor with very small gains from pre- to post-test, we will offer information on using basic interactive teaching techniques. For those with larger gains, we will offer suggestions based on the content clusters in which students scored poorly.
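
The text does not specify how gains are measured or where the cutoffs lie. The Python sketch below assumes Hake's class-average normalized gain, <g> = (post - pre) / (100 - pre) with averages in percent, a common measure for pre/post concept inventories. The 0.3 cutoff borrows Hake's boundary for "low-g" courses, while the 60% threshold for a weak content cluster, the cluster names, and the function names are all hypothetical.

```python
def normalized_gain(pre_percent, post_percent):
    """Hake's class-average normalized gain: (post - pre) / (100 - pre),
    where pre and post are class-average scores in percent."""
    if pre_percent >= 100:
        raise ValueError("normalized gain is undefined when the pretest average is 100%")
    return (post_percent - pre_percent) / (100 - pre_percent)


def recommend(pre_percent, post_percent, cluster_scores,
              low_gain_cutoff=0.3, weak_cluster_cutoff=60.0):
    """Return a list of recommendation strings.

    `cluster_scores` maps a content-cluster name to the class's post-test
    percent correct on that cluster. Both cutoffs are illustrative assumptions.
    """
    g = normalized_gain(pre_percent, post_percent)
    if g < low_gain_cutoff:
        # Very small gains: point to basic interactive teaching techniques.
        return ["Consider basic interactive teaching techniques"]
    # Larger gains: point to the content clusters where students scored poorly.
    weak = sorted(name for name, score in cluster_scores.items()
                  if score < weak_cluster_cutoff)
    return [f"Look at suggestions for the '{name}' cluster" for name in weak]


# Hypothetical class averages and cluster scores.
clusters = {"kinematics": 80.0, "forces": 55.0, "third law": 45.0}
print(recommend(40, 55, clusters))  # <g> = 0.25
print(recommend(40, 70, clusters))  # <g> = 0.5
```

Normalizing by the room left to improve (100 - pre) keeps classes with high pretest averages from looking artificially weak, which matters when the same rule must serve many institutions.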

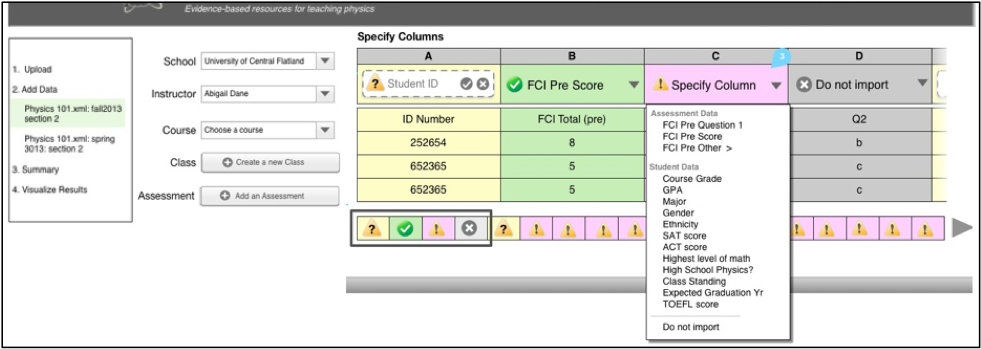

Another important feature of our system is a smooth, low-effort uploader tool for importing assessment data. Faculty we talked to emphasized that it is vital for the system to be quick and easy to use. In response, we designed an uploader tool (Fig. 6) that guesses what data each column of a user’s spreadsheet contains and asks the user to confirm the guesses. It also automatically imports information about the user’s institution from the Carnegie Database, minimizing the number of fields users must fill in.

Fig. 6: Key elements from the uploader tool: (a) overview of steps in uploading data; (b) context of assessment; (c) the system for guessing column headings for uploaded spreadsheet
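
The column-guessing step in Fig. 6c could work along the lines of the Python sketch below. The uploader's actual rules are not described here, so the field names, header patterns, and numeric fallback are assumptions: headers are matched against keyword patterns first, columns with unrecognized headers are classified from their values, and every guess is returned for the user to confirm or correct.

```python
import re

# Hypothetical target fields and header patterns, checked in order.
HEADER_RULES = [
    ("question", re.compile(r"^\s*(q|question)\s*\d+\s*$", re.IGNORECASE)),
    ("pre_score", re.compile(r"\bpre", re.IGNORECASE)),
    ("post_score", re.compile(r"\bpost", re.IGNORECASE)),
    ("student_id", re.compile(r"\b(id|student)\b", re.IGNORECASE)),
]


def looks_numeric(value):
    """True if the cell parses as a number."""
    try:
        float(value)
        return True
    except ValueError:
        return False


def guess_column(header, values):
    """Guess a column's contents from its header, falling back to its values."""
    for field, pattern in HEADER_RULES:
        if pattern.search(header):
            return field
    non_blank = [v for v in values if v.strip()]
    if non_blank and all(looks_numeric(v) for v in non_blank):
        return "numeric (unlabeled)"
    return "unknown"


def guess_columns(rows):
    """`rows` is a list of lists of strings whose first row holds the headers.
    Returns (header, guess) pairs for the user to confirm or correct."""
    header_row, data = rows[0], rows[1:]
    return [
        (header, guess_column(header, [row[i] for row in data]))
        for i, header in enumerate(header_row)
    ]


# Hypothetical spreadsheet contents.
sheet = [
    ["Student ID", "PreTest", "Post-test %", "Q1", "Section", "Notes"],
    ["a123", "40", "70", "1", "2", "late"],
    ["b456", "55", "80", "0", "3", ""],
]
for header, guess in guess_columns(sheet):
    print(f"{header!r}: {guess}")
```

Any keyword heuristic will misfire on some headers (a column named "Postal code" would be guessed as a post-test score), which is why asking the user to confirm each guess remains an essential part of the workflow.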